Give Agents Autonomy. Give Humans Reason to Trust.

Economics tells you where agents are viable. It does not tell you how to make them trustworthy.

The economic frame establishes the foundation: honest baselines, explicit failure models, outcome-based measurement. But economic viability is a necessary condition, not a sufficient one. An organization can know that automating a process saves money and still refuse to do it — because no one trusts the agent to execute it correctly.

That gap — between economic justification and organizational willingness — is not an emotional problem. It is a structural one. And the structure that closes it is not control. It is trust.

Control Is the Wrong Frame

Most conversations about AI agents focus on control: how to constrain them, monitor them, limit what they can do. Control is the wrong frame. It creates bottlenecks. Taken to its conclusion, control means every agent action requires a human in the loop, which defeats the purpose of autonomy.

The result is a binary that serves no one. Either agents are micromanaged — requiring approval for every action, producing no efficiency gain — or they run unsupervised with no guardrails, producing anxiety that eventually kills the initiative.

The real question is not how to control agents. It is how to give agents enough autonomy to be useful while giving humans enough visibility to be confident.

Who Defines the Boundaries

Before the mechanism, the ownership question. Most teams get this wrong by default: they let developers define the boundaries of agent behavior. But the person who knows when a refund requires manager approval is not the engineer — it is the operations lead. The person who knows which compliance checks are non-negotiable is not the platform team — it is the risk manager.

Guardrails must be written by the people who own the domain, in language they already use. This is not a preference. It is a structural requirement. If the people who understand the process cannot read and modify the boundaries, the boundaries will drift from reality — and trust will erode regardless of the mechanism.

SOPs as Trust Contracts

Here is the structural shift: SOPs are not process documents. They are trust contracts.

When an SOP uses clear requirement levels — MUST, SHOULD, MAY — it encodes a dual-purpose agreement between humans and agents.

The agent reads the SOP as an operating framework:

- MUST defines hard boundaries. The agent does not cross these, ever.

- SHOULD defines the expected approach. The agent follows it by default but adapts if context demands it.

- MAY defines full autonomy. The agent decides how.

The human reads the same SOP as a trust contract:

- MUST means this always happens, regardless of circumstance.

- SHOULD means the default behavior is known, and deviations will be visible.

- MAY means the decision has been delegated. The human trusts the outcome.

Same document. Two audiences. The agent reads it as a framework that defines where it has freedom. The human reads it as a contract that defines what they can rely on.
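To make the dual reading concrete, here is a minimal sketch of how one SOP could be represented so that the agent and the human see the same rules. Everything in it is an assumption made for illustration: the `Level`, `Step`, `Decision`, and `check` names, the refund steps, and the choice to treat an unexplained SHOULD deviation as a violation (one way of making deviations visible).

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    MUST = "MUST"      # hard boundary: never skipped or altered
    SHOULD = "SHOULD"  # default approach: deviation allowed, but it must be visible
    MAY = "MAY"        # delegated: the agent decides


@dataclass(frozen=True)
class Step:
    level: Level
    text: str


@dataclass
class Decision:
    step: Step
    followed: bool
    reason: str = ""   # the agent's stated reason when it deviates


def check(decision: Decision) -> str:
    """Classify one agent decision against the step's requirement level."""
    if decision.followed:
        return "ok"
    if decision.step.level is Level.MUST:
        return "violation"  # hard boundary crossed, whatever the reason
    if decision.step.level is Level.SHOULD:
        # Assumption: a deviation only counts as acceptable if it is explained.
        return "deviation" if decision.reason else "violation"
    return "ok"  # MAY: the choice belongs to the agent


# Hypothetical refund SOP, written in the domain owner's language.
refund_sop = [
    Step(Level.MUST, "Obtain manager approval before issuing a refund above the approval limit"),
    Step(Level.SHOULD, "Refund to the original payment method"),
    Step(Level.MAY, "Choose the wording of the customer notification"),
]

results = [
    check(Decision(refund_sop[0], followed=True)),
    check(Decision(refund_sop[1], followed=False, reason="original card expired")),
    check(Decision(refund_sop[2], followed=False)),
]
print(results)  # ['ok', 'deviation', 'ok']
```

The same `refund_sop` list is what a human reviewer would read. Only the interpretation differs: the agent uses it to decide, the reviewer uses `check` results to see what happened.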

Governing AI agents is less like managing a factory floor and more like how a board of directors interacts with a CEO: set strategic direction, define decision-making boundaries, maintain oversight — but do not prescribe every step.

Autonomy as a Dial

As we explored in the Economics for Agentic AI guide, organizations are shifting from upfront technology investments to pay-per-outcome models. This only works when agents can act autonomously — and when humans can trust the outcomes without watching every step.

MUST/SHOULD/MAY makes autonomy a dial, not a switch. Start with more MUST steps and fewer MAY steps. As trust builds, as the agent demonstrates reliability and the execution data confirms consistency, relax steps one level at a time: MUST steps that were precautionary rather than non-negotiable become SHOULD, and SHOULD steps become MAY. The agent gains autonomy incrementally. The human’s trust framework stays intact throughout.

This is not a one-time calibration. Risk tolerance shifts as processes mature, as agents prove reliability, as the business context changes. The guardrails evolve with it. The trust contract is living architecture, not a static document.
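One way to picture turning the dial is a periodic review of execution data that proposes which SHOULD steps have earned MAY status. The sketch below is illustrative only: the `StepRecord` fields, the step ids, and the `min_runs` and `min_rate` thresholds are hypothetical, and the output is a proposal for the domain owner to accept or reject, not an automatic change.

```python
from dataclasses import dataclass


@dataclass
class StepRecord:
    step_id: str
    level: str       # "MUST", "SHOULD", or "MAY"
    runs: int        # how many times the agent executed this step
    accepted: int    # runs whose outcome a human reviewer accepted


def propose_promotions(records, min_runs=200, min_rate=0.99):
    """Return ids of SHOULD steps whose track record suggests they could become MAY."""
    proposals = []
    for record in records:
        if record.level != "SHOULD":
            continue  # MUST steps are never relaxed by this review
        if record.runs >= min_runs and record.accepted / record.runs >= min_rate:
            proposals.append(record.step_id)
    return proposals


# Hypothetical execution history.
history = [
    StepRecord("verify-identity", "MUST", runs=500, accepted=500),
    StepRecord("refund-original-method", "SHOULD", runs=320, accepted=319),
    StepRecord("offer-store-credit", "SHOULD", runs=40, accepted=40),
]

print(propose_promotions(history))  # ['refund-original-method']
```

The second SHOULD step has a perfect record but too few runs to count as evidence, so it stays where it is. Raising or lowering the thresholds is itself a risk-tolerance decision that belongs to whoever owns the process.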

The question is not how to control AI agents. It is how to give agents the autonomy to act — and give humans the framework to rely on.

From Trust to Authority

The trust contract solves the bilateral problem: agents know their boundaries, humans know their guarantees. But it raises a structural question that trust alone cannot answer.

When three teams write three SOPs for three agents that touch the same process, each trust contract may be internally coherent and still contradict the others: one may delegate a decision that another forbids. The problem is not trust. It is authority: who granted those decision rights, and whether they are consistent across the organization.
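The cross-team problem can be illustrated with a small consistency check. This sketch assumes each SOP rule can be reduced to a (team, step, level) triple with a shared step key. The team names, step keys, and `find_conflicts` helper are hypothetical, and the check only detects disagreement; deciding who has the authority to resolve it is exactly what it cannot do.

```python
from collections import defaultdict


def find_conflicts(rules):
    """Group rules by step and return the steps where teams assign different levels."""
    by_step = defaultdict(dict)
    for team, step, level in rules:
        by_step[step][team] = level
    return {
        step: levels
        for step, levels in by_step.items()
        if len(set(levels.values())) > 1
    }


# Hypothetical rules extracted from three teams' SOPs for the same process.
rules = [
    ("support-team", "manager_approval_for_refund", "SHOULD"),
    ("finance-team", "manager_approval_for_refund", "MUST"),
    ("fulfilment-team", "manager_approval_for_refund", "MAY"),
    ("support-team", "log_customer_contact", "MUST"),
    ("finance-team", "log_customer_contact", "MUST"),
]

conflicts = find_conflicts(rules)
for step, levels in conflicts.items():
    print(step, levels)
```

Each team's SOP is coherent on its own. The conflict only appears when the rules are laid side by side.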

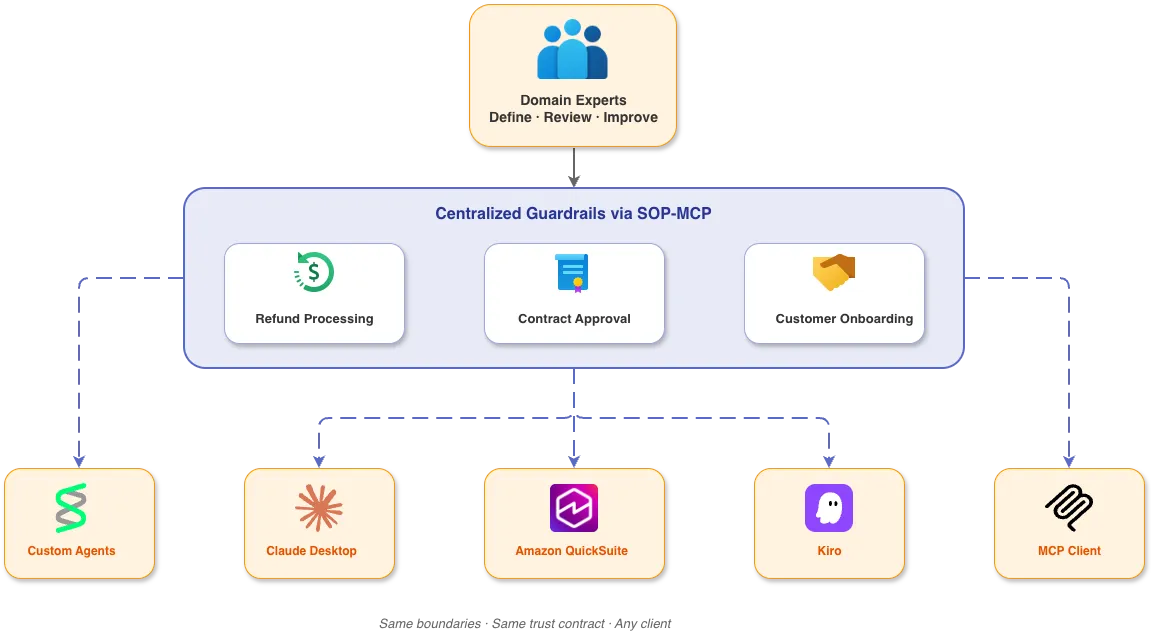

With SOP-MCP, agents can consume an SOP all at once or execute it step by step, and the same guardrails are available to any client that speaks MCP (Model Context Protocol). A custom-built agent, Amazon QuickSuite, Claude Desktop, Kiro, or Cursor: same boundaries, same trust contract, regardless of where the work happens.
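The difference between the two consumption modes can be sketched without any real tooling. The class below is a hypothetical in-memory stand-in, not the SOP-MCP interface or an MCP SDK call. `SopSession`, `read_all`, and `next_step` are names invented for this illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SopSession:
    """Hypothetical stand-in for an SOP source; not the SOP-MCP interface."""
    steps: list
    _cursor: int = field(default=0, init=False)

    def read_all(self):
        """All-at-once mode: hand the whole SOP to the agent in one call."""
        return list(self.steps)

    def next_step(self):
        """Step-by-step mode: release one step at a time, None when finished."""
        if self._cursor >= len(self.steps):
            return None
        step = self.steps[self._cursor]
        self._cursor += 1
        return step


sop = [
    "MUST verify the customer's identity",
    "SHOULD refund to the original payment method",
    "MAY choose the wording of the notification",
]

session = SopSession(sop)
whole = session.read_all()

served = []
while (step := session.next_step()) is not None:
    served.append(step)

print(whole == served)  # True
```

Both modes deliver the same steps in the same order. What changes is how much of the SOP the agent holds at once, which matters when a server wants to observe or gate progress between steps.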

Trust tells you how to calibrate autonomy for a single process. Authority tells you how to ensure that calibration is consistent, legitimate, and enforceable across the organization. That is a different problem — and it requires a different structure.